This is a guest post by Alex Cioc, who is a sophomore at Caltech, working on new data visualization techniques.

In the era of “Big Data” science, the real challenges come not from the sheer volume of data obtained but rather from the complexity of these data. For example, in a typical sky survey we measure tens or even hundreds of attributes for each detected source. The data represent vectors in a parameter space of that many dimensions. Visualizing such data is a highly non-trivial task. Yet visualization is key to analysis.

Ideally, we can solve this issue with our own intuitions, by utilizing the great pattern recognition engines in our brains – our innate abilities to spot trends, correlations, and outliers in a specific landscape. If we can create a bridge between the quantitative content of data and our understanding, we can form an effective visualization engine. We already use 2-dimensional plots, and even 2-D projections of 3-D data representations. However, what do we do if the data space has more than 3 dimensions? Answering this question is the critical issue in “Big Data” science, astronomy included.

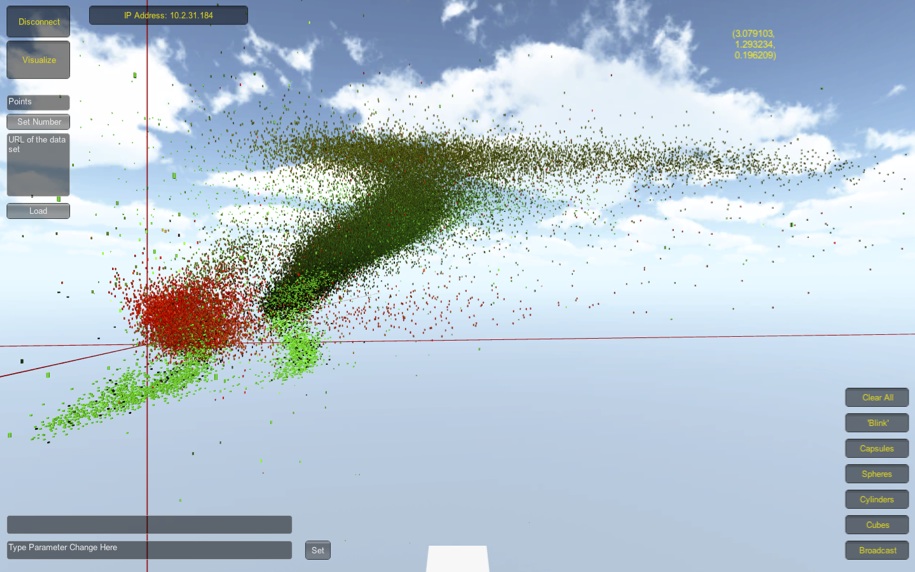

Our prototype, which uses the Unity3D™ game development engine, can currently render 100,000 data objects in about 15 seconds on a mid-2011 MacBook Air.

Our group at Caltech has been experimenting with the use of immersive virtual reality (VR) spaces as a data visualization platform. The initial efforts were performed under the auspices of the Meta-Institute for Computational Astrophysics (MICA; Farr et al. 2009, Djorgovski et al. 2013). Above and beyond any good 3-D data-plotting package, this form of visualization delivers user immersion. As humans, we are “optimized” to interact within a 3-D world. Virtual worlds such as Second Life™ and its open-source counterparts that use the Open Simulator (OpenSim) platform offer a handy venue for such 3-D exploration. Thus, a scientist can “walk” into their data while interacting and collaborating with colleagues in the same virtual space. Equally notable, such methods have a low barrier to entry – these virtual worlds are free to use and can be accessed from any mainstream desktop or laptop computer. Furthermore, they allow for the encoding of up to nine dimensions of a data space using the XYZ positions, RGB colors, transparency (alpha layer), size, and shape of 3-D data objects. Additional dimensions may also be encoded through animation (e.g., spinning or pulsation), textures, or other such methods.
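To make the nine-channel encoding concrete, here is a minimal Python sketch of how one row of nine attributes could be turned into a single 3-D glyph. It rests on assumptions that are ours, not the authors': every attribute is min-max normalized to [0, 1], the ninth attribute is binned into a small set of marker shapes, and the names `Glyph`, `encode`, `normalize`, `SHAPES` as well as the alpha and size ranges are hypothetical. It is not the code of the Unity3D browser described here.

```python
from dataclasses import dataclass
from typing import List, Sequence

# Hypothetical set of discrete marker shapes used for the ninth channel.
SHAPES = ("cube", "sphere", "pyramid", "cylinder")


@dataclass
class Glyph:
    """One rendered data object: position, color, opacity, size, shape."""
    x: float
    y: float
    z: float
    r: float
    g: float
    b: float
    alpha: float
    size: float
    shape: str


def normalize(column: Sequence[float]) -> List[float]:
    """Min-max scale a column to [0, 1]; a constant column maps to 0.5."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.5] * len(column)
    return [(v - lo) / (hi - lo) for v in column]


def encode(rows: Sequence[Sequence[float]]) -> List[Glyph]:
    """Map each 9-attribute row onto XYZ, RGB, alpha, size and shape.

    Hypothetical mapping for illustration only; the channel order and
    ranges are assumptions, not the browser's actual implementation.
    """
    if any(len(row) != 9 for row in rows):
        raise ValueError("each row must have exactly 9 attributes")
    # Normalize column by column so every attribute spans [0, 1].
    cols = [normalize(col) for col in zip(*rows)]
    glyphs = []
    for i in range(len(rows)):
        v = [col[i] for col in cols]
        # Bin the ninth attribute into one of the discrete shapes.
        shape_idx = min(int(v[8] * len(SHAPES)), len(SHAPES) - 1)
        glyphs.append(Glyph(
            x=v[0], y=v[1], z=v[2],
            r=v[3], g=v[4], b=v[5],
            alpha=0.2 + 0.8 * v[6],  # floor of 0.2 keeps faint points visible
            size=0.5 + 1.5 * v[7],   # scale factor from 0.5x to 2x
            shape=SHAPES[shape_idx],
        ))
    return glyphs
```

For example, `encode([[0] * 9, [10, 5, 2, 1, 1, 1, 4, 8, 3]])` yields a small, faint cube at the origin and a large, opaque cylinder at (1, 1, 1). Normalizing each column independently keeps the mapping free of units; an interactive tool would presumably also let the user choose which attribute drives which channel.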

Nevertheless, these virtual worlds have technical limitations and drawbacks, and, as a result of their game-like appearance, sometimes have subjective stigmas attached to them. With that in mind, we started to develop a 3-D data browser that can be run either as a standalone program for Mac/Windows/Linux or through any standard Web browser. We used the Unity3D™ game development engine because it is a very efficient, popular, commercial engine.

Our prototype data browser (Cioc et al. 2013) uses the same approach to encode multiple data dimensions, allows multiple forms of user control, and allows for the loading of local or external data sets. Cross-platform users, who are represented by small cubes, can interact within the same space and control what they see. On a mid-2011 MacBook Air, our application can render 100,000 data objects in about 15 seconds. The same computer can render over half a million 3-D data objects, as shown in the figure below.

As soon as we feel that it is ready and robust enough for public distribution, the data browser will be freely available. We hope that it will help adoption of immersive and collaborative 3-D and VR data visualization tools for “Big Data” science and that it can act as a step towards solving the big problem of “Big Data” science. Stay tuned!

Wonderful. Looking forward to the public distribution of the data browser!

-Jorge

I wonder if the restrictions from using a commercial engine outweigh the benefits for exploration, compared with using something like the rgl package in R?

I can see some benefit in some kind of collaborative 3-d data exploration session, but is it worth the effort – will people really be bothered to do this often enough? Plus, if I recall, unity3d doesn’t work on linux…

@Philip

Using a commercial engine allows for full 3D exploration rather than a more restricted range of movement. In addition, Unity3D easily ports to every platform (including Linux, which they added last summer and officially released in November).

@Philip, I personally would love an interactive data exploration session, especially one that can project 9+ dimensions. With large data sets that can and need to be looked at as a function of many parameters, current plotting packages can be limiting. Of course, the challenge is to make a platform that convinces people that it is worth using.

Excellent, didn’t realise unity3d had been ported to linux now.

This sounds like a very good idea. Where should we stay tuned?

Question: Is there any sort of virtual collaborative environment to replace MICA? I’m looking for some sort of online watering hole to talk about astronomy and HPC.

Also, I’ve ended up in finance, and it’s interesting that high-level visualization isn’t used in the financial world. The main reason is that most scientific visualization involves looking at the global properties of data that can be mapped onto a three- or four-dimensional space. In finance, most data are hundred- or thousand-dimensional, and you are interested in exact numbers at the current location. Something else that happens in finance is that you end up taking a low-dimensional slice of a large space, so the challenge involves 1) preparing the data for plotting and 2) providing a lot of contextual information in text. The actual plots are simple and intentionally conventional. Since the financial crisis, people have become (justifiably) scared of anything fancy, so they want to see a simple line plot in a simple PowerPoint. It turns out that getting a simple line plot is not that simple.

Also, for presentations, people are not interested in data exploration. They are interested in data stories, so what you don’t want is to put someone in a room where they are playing with data. What you want is to tell a story.

Heavy visualization is much more common in geology, where you can map things onto a 3-D platform.

Also, if you want something that is easily commercializable, making it open source gives you a huge advantage. In any large corporation you need to spend a lot of time getting any sort of business justification. Ironically, this works against “cheap” packages. If you have to spend $10 million to do something, it’s worth holding a massive set of meetings to approve it. If you want to spend $100, then it’s not going to get done, because no one is going to hold the meetings to authorize you to spend $100. In that situation, if you can just download the package from the internet, it’s easy. Also, if it’s an open source package, you can get major “donations” from big companies. Our company heavily uses several open source packages, and we end up pushing our bug fixes and enhancements back into the code pool. No one knows that we are doing it, sometimes including the maintainers of the packages themselves.

If you’re interested in interactive investigation of large multi-dimensional datasets, TOPCAT is another option (also seen before on astrobetter here). The rendering is less beautiful than what you get from a commercial 3d engine and it does not offer collaborative features, but it can cope easily with quite large data sets (a million points in less than a second, depending on the details), it’s highly interactive, and it has lots of options for multi-dimensional visualisation (2d/3d plots, colour coding, marker size/shape, error bars, vectors, …) amongst other things; I won’t go on. The new graphics features in the recent v4.0b release offer some improvements in this area, especially 3d navigation.

Apologies if it’s rude to advertise my tool on somebody else’s thread, but it does seem to be relevant to some of the followup comments above.

Following on from Mark’s comment: I would add that TOPCAT allows you to interact directly with a number of public databases, such as those of the Virgo Consortium (e.g., the Millennium Simulations) and various other surveys. I believe TOPCAT offers a good way to get students going with research.

This is a great project. Is it already public? I couldn’t find any info on the web.

I too would like to experiment with this tool. It’s now been more than 2 years since the original article. Seems to me that either the research has been abandoned or completed. Either way, the community could benefit by having access to the code.

Does anyone know if this is available to the public yet? I am in an Advanced 3D Visualization and Simulation class at my university and have to give a presentation to the class about data visualization. My professor has said that, as part of our presentation, he would like us to find outside work that we can showcase and walk the class through in Unity, and maybe even hook it up to the Oculus Rift for greater immersion. If there is a download link or website where I could get this, I would greatly appreciate it.

Thanks